While HTC's upcoming Vive Cosmos and Oculus’ Quest are more eye-catching launches for those looking to invest in VR soon, HTC has launched some other fascinating forward-looking tech here at CES 2019.

The Vive Pro Eye brings eye tracking to the previously launched pro-level headset, and we have to say it feels like the future of VR. It will launch in the “second quarter” of 2019. Naturally, we've had a go, so here's what we thought.

The Eye uses standard Vive hand controllers, and the headset is similar to the Vive Pro - there are two cameras on the front - and it can be wired or, eventually, wireless. As you'd expect, the Eye requires a PC to work.

Our quick take

Vive Pro Eye is clearly aimed at professional uses, but it points the way to an exciting future for VR – namely where the computer or headset can understand what you’re looking at and react accordingly. It will be interesting to hear, over the coming months, how much it will cost.

In its announcement, HTC also said that adding eye tracking to Vive Pro would help developers better understand users by tracking eye movement – where people are looking – and there’s a lot to be said for that.

There’s also an accessibility argument: eye control could help people who are able to move their eyes but don’t have fine control of their hands.

How it works

- A short training session

- You can control menus

- Foveated rendering from Nvidia

Although controllers are still necessary for various tasks, we were guided through a set of demos that really did indicate that your eyes can control what you want to do, leaving your hands free to do other stuff and act more naturally. You can even control menus just by settling your eyes on the option you want. It really does work.
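To make the idea of choosing a menu option by settling your eyes on it concrete, here is a minimal sketch of one common approach, dwell-time selection. HTC did not say how the demos decide that you have picked something, so the technique, the `dwell_select` name, the sample format and the 0.8 second threshold are all assumptions for illustration.

```python
def dwell_select(samples, dwell_seconds=0.8):
    """Return the first menu option looked at continuously for dwell_seconds.

    samples is an iterable of (timestamp_seconds, option) pairs, where option
    is the name of the menu item under the gaze point, or None when the user
    is looking elsewhere. The sample format and the default threshold are
    hypothetical, not taken from HTC's software.
    """
    current = None   # option currently under the gaze point
    started = None   # time the gaze first landed on that option
    for timestamp, option in samples:
        if option != current:
            # Gaze moved to a different option (or off the menu): restart the timer.
            current = option
            started = timestamp
        elif option is not None and timestamp - started >= dwell_seconds:
            # Gaze has rested on the same option long enough to count as a choice.
            return option
    return None


# A glance at "Play" is ignored; resting on "Quit" selects it.
gaze_log = [(0.0, "Play"), (0.3, "Play"), (0.5, "Quit"), (0.9, "Quit"), (1.4, "Quit")]
selected = dwell_select(gaze_log)
```

The timer restarts whenever the gaze leaves an option, which is what stops a passing glance from triggering a selection.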

Our favourite demo was quite different from the others – the Major League Baseball (MLB) Home Run Derby VR game, where you can control all the menus using your eyes while you hold and wield a baseball bat. It was the only pure gaming demo available.

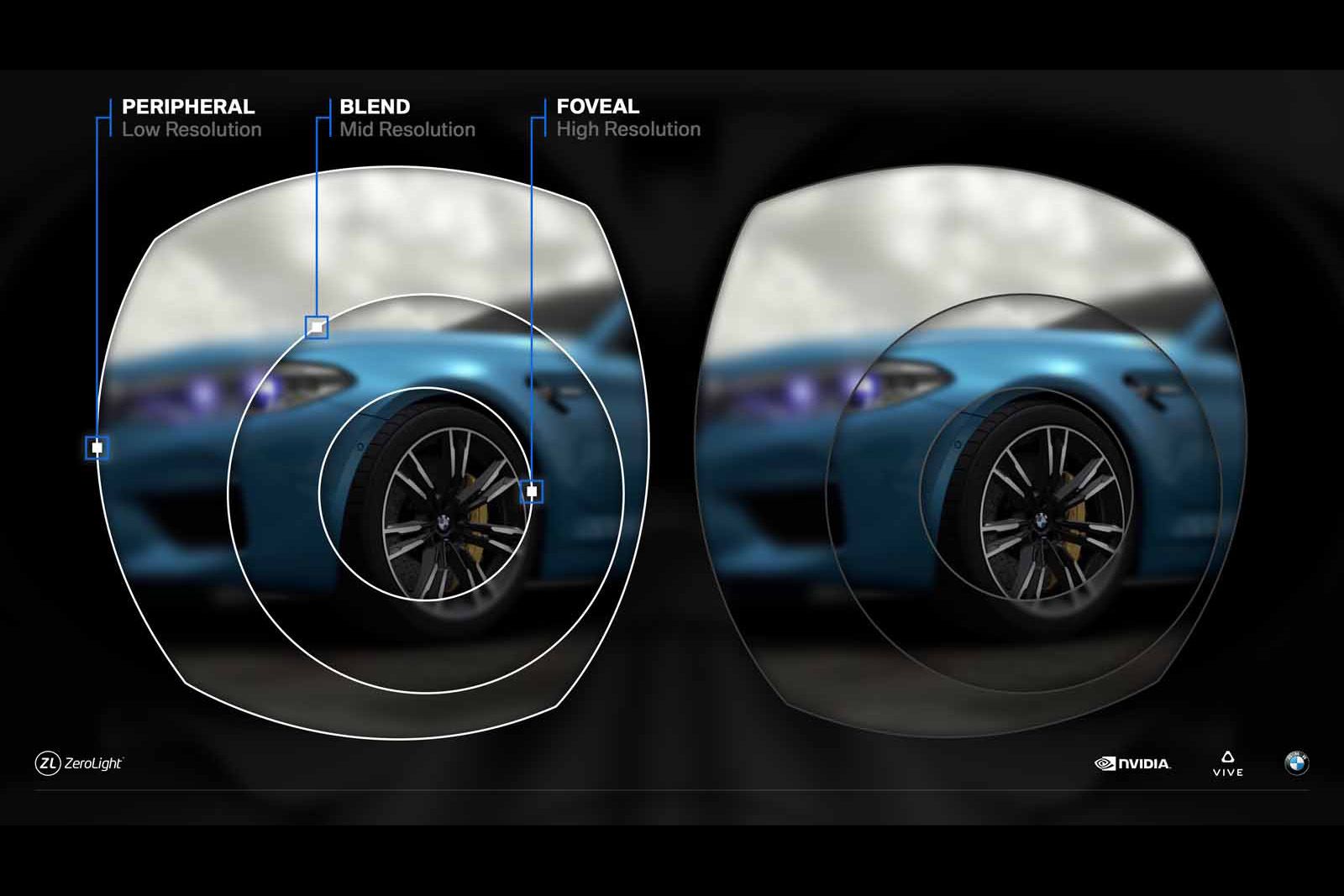

Vive has been working with Nvidia to enable foveated rendering on the headset. That means the parts of the image that fall in your peripheral vision are rendered at a lower resolution.

Where you're looking is rendered at a higher resolution. You don't notice the difference because your peripheral vision is less sharp.
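As a rough illustration of the principle, the sketch below splits the screen into tiles and picks a resolution for each one based on how far it is from the point you are looking at. It is not Nvidia's or HTC's implementation: the function names, the tile grid, the two radii and the three quality levels are all assumptions chosen to keep the example small.

```python
import math


def shading_scale(tile_center, gaze, inner_radius=0.15, outer_radius=0.40):
    """Return the fraction of full resolution to use for one screen tile.

    Coordinates are normalised to 0..1 across the display. The radii and
    the three quality levels are illustrative assumptions.
    """
    distance = math.dist(tile_center, gaze)
    if distance <= inner_radius:
        return 1.0   # where the eye is pointing: full resolution
    if distance <= outer_radius:
        return 0.5   # near periphery: half resolution
    return 0.25      # far periphery: quarter resolution


def build_scale_map(gaze, tiles_x=8, tiles_y=8):
    """Compute a resolution scale for every tile in a tiles_x by tiles_y grid."""
    scale_map = []
    for row in range(tiles_y):
        scale_row = []
        for col in range(tiles_x):
            center = ((col + 0.5) / tiles_x, (row + 0.5) / tiles_y)
            scale_row.append(shading_scale(center, gaze))
        scale_map.append(scale_row)
    return scale_map


def relative_pixel_cost(scale_map):
    """Estimate shaded pixels relative to rendering every tile at full resolution."""
    scales = [s for row in scale_map for s in row]
    # A tile at scale s has s * s as many pixels as a full-resolution tile.
    return sum(s * s for s in scales) / len(scales)


# Looking at the middle of the screen: centre tiles stay sharp, corners do not.
scale_map = build_scale_map(gaze=(0.5, 0.5))
cost = relative_pixel_cost(scale_map)
```

Because the map is rebuilt from the latest gaze position, the sharp region follows your eyes, and `cost` gives a feel for how much rendering work is saved compared with drawing everything at full resolution.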

Each demo started the same way, with a very short calibration process. All you need to do is focus on a few blue dots that appear across the screen and you're good to go. There's also the option to adjust your interpupillary distance.

It's a refreshing experience because all you need to do is look in the right place when you're directed to.

The demos we saw

- Lockheed Martin scenario gives a taster of flying a jet

- Learning to speak in public - without anybody present

- BMW marketing demo tracks what people see

Many of the scenarios we saw involved training situations. There was a public speaking demo where you could learn how to become a better speaker, while another, developed by Lockheed Martin, gave us a taste of what it was like to take off in a fighter jet.

VR is great for scenarios where it's impossible to put yourself in a particular live situation. The Ovation public speaking demo didn't use the eye-tracking tech but it's still a good example of where VR can be used - you're not going to get 100 people together just to hear you practise.

The Lockheed Martin plane demo is designed so a trainer can see where their charge is looking - are they paying attention to the right stuff, in other words.

Likewise, a BMW marketing demo - the M Virtual Experience - can show where customers have interacted with what was on offer.

To recap

Vive Pro Eye points the way to an exciting future for VR – namely where the computer or headset can understand what you’re looking at and react accordingly.