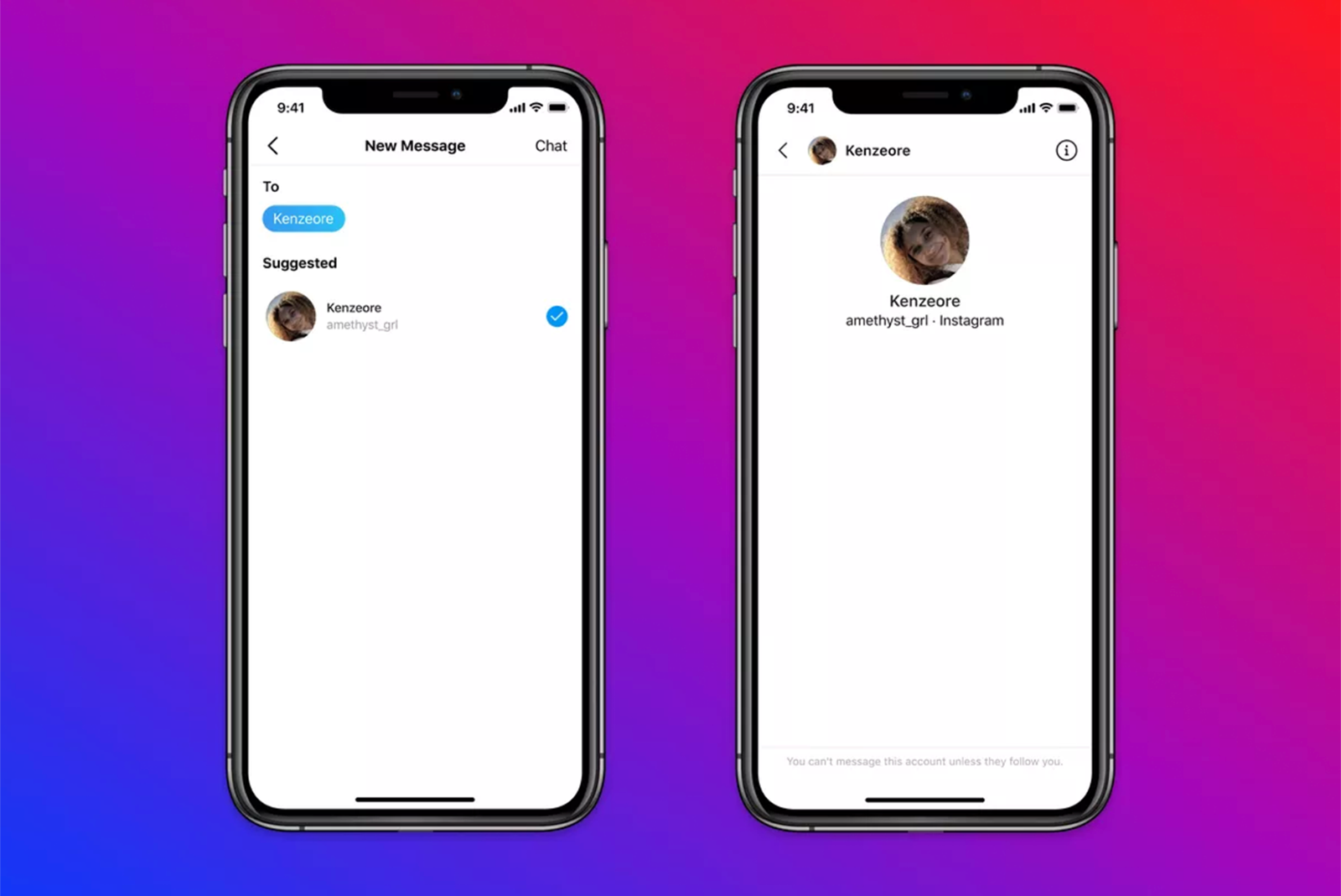

Facebook-owned Instagram is updating its policies to limit how teenagers and adults can interact on its platform, with the goal of making it safer for young users. For starters, the app has banned adults from direct messaging teenagers who don’t follow them.

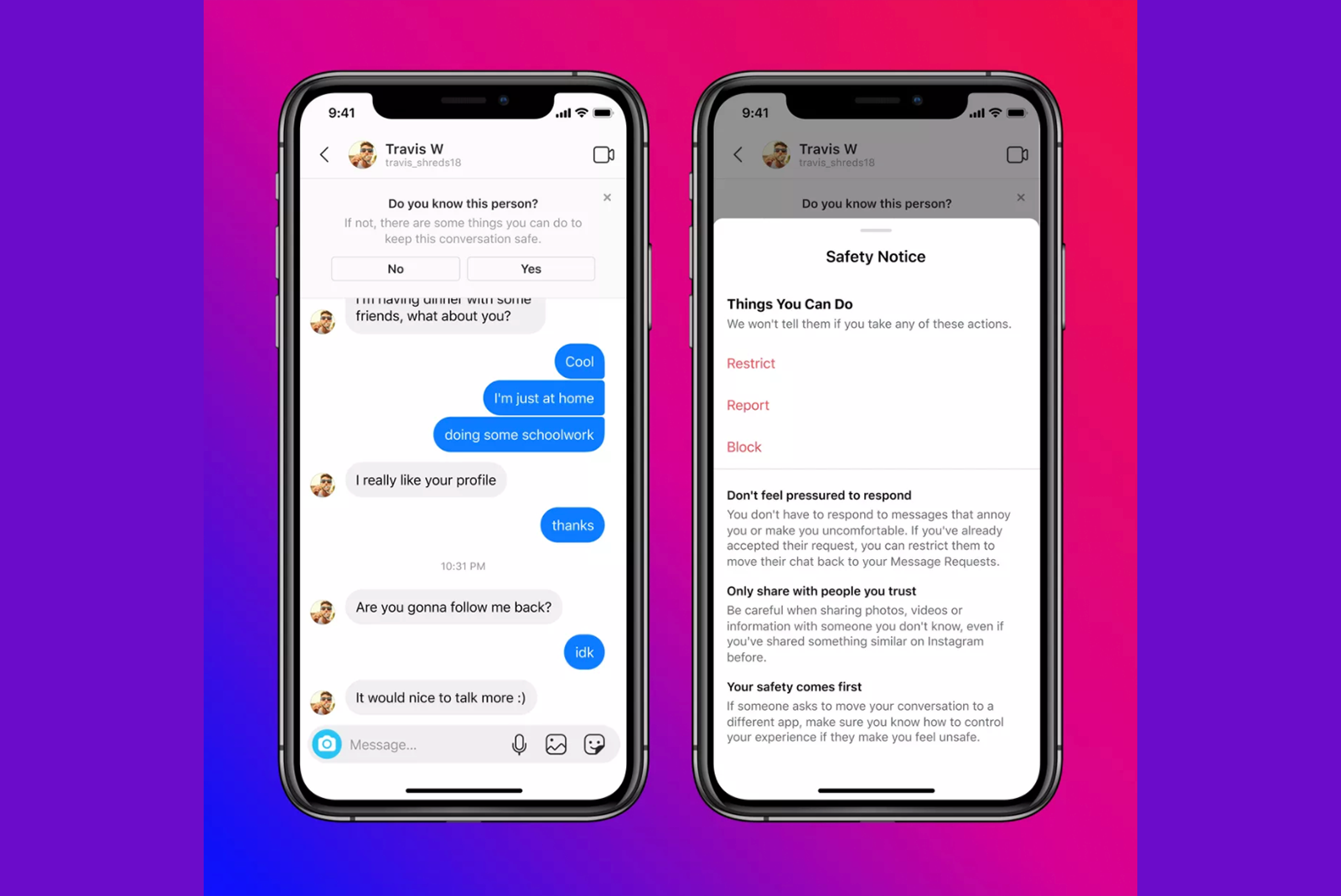

Instagram also announced it is introducing “safety prompts” that will appear to teenagers when they message adults who have been “exhibiting potentially suspicious behaviour”. These prompts will give teenagers the option to report or block adults who are messaging them. They will also warn teenagers to “be careful sharing photos, videos, or information with someone you don’t know”.

It's unclear how Instagram’s moderation system will detect suspicious behaviour from adult users, but the company cited one example of such behaviour: an adult sending “a large amount of friend or message requests to people under 18”. Instagram is also developing new “artificial intelligence and machine learning technology” to detect someone’s age when they sign up for an account.

Instagram requires users to be at least 13, but that requirement is easy to get around.

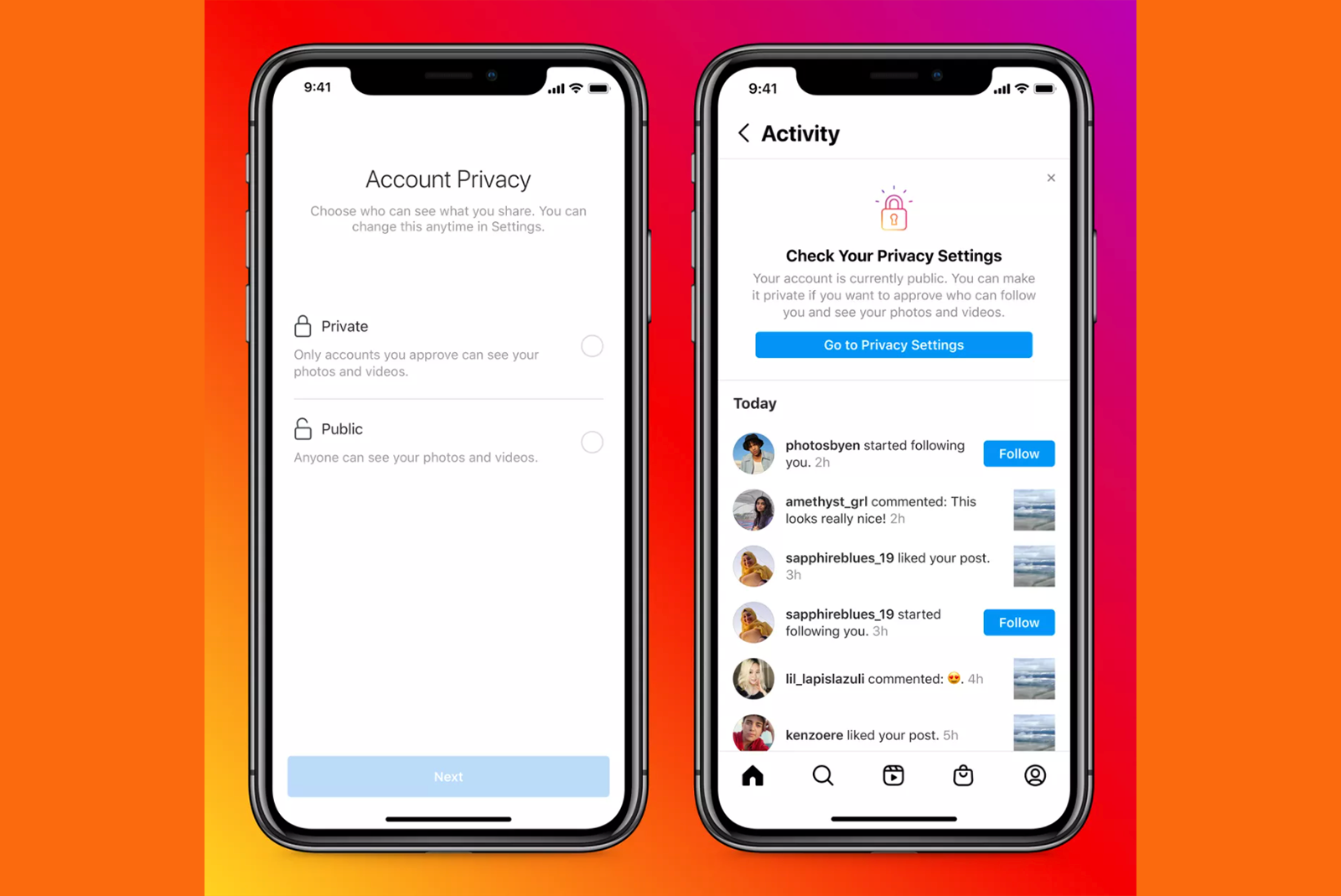

Going forward, new teenage users will be encouraged to make their profile private. Those who decide to create public accounts anyway will be reminded of the “benefits of a private account” and asked to check their settings.