Facebook-owned Instagram has rolled out a new caption feature aimed at tackling bullying on the platform. Here's how it works.

What's new with Instagram?

Starting 16 December, Instagram will notify people when their captions on a photo or video "may be considered offensive" - giving them the opportunity to "pause and reconsider their words before posting". In other words, it's launching an anti-bullying tool that is powered by AI.

Instagram said it "developed and tested" an AI that can "recognise different forms of bullying" on Instagram. It builds upon a feature from earlier this year that notifies users when their comments on photos and videos may be considered offensive before they’re posted. "Results have been promising, and we’ve found that these types of nudges can encourage people to reconsider their words", Instagram explained.

How does the feature work?

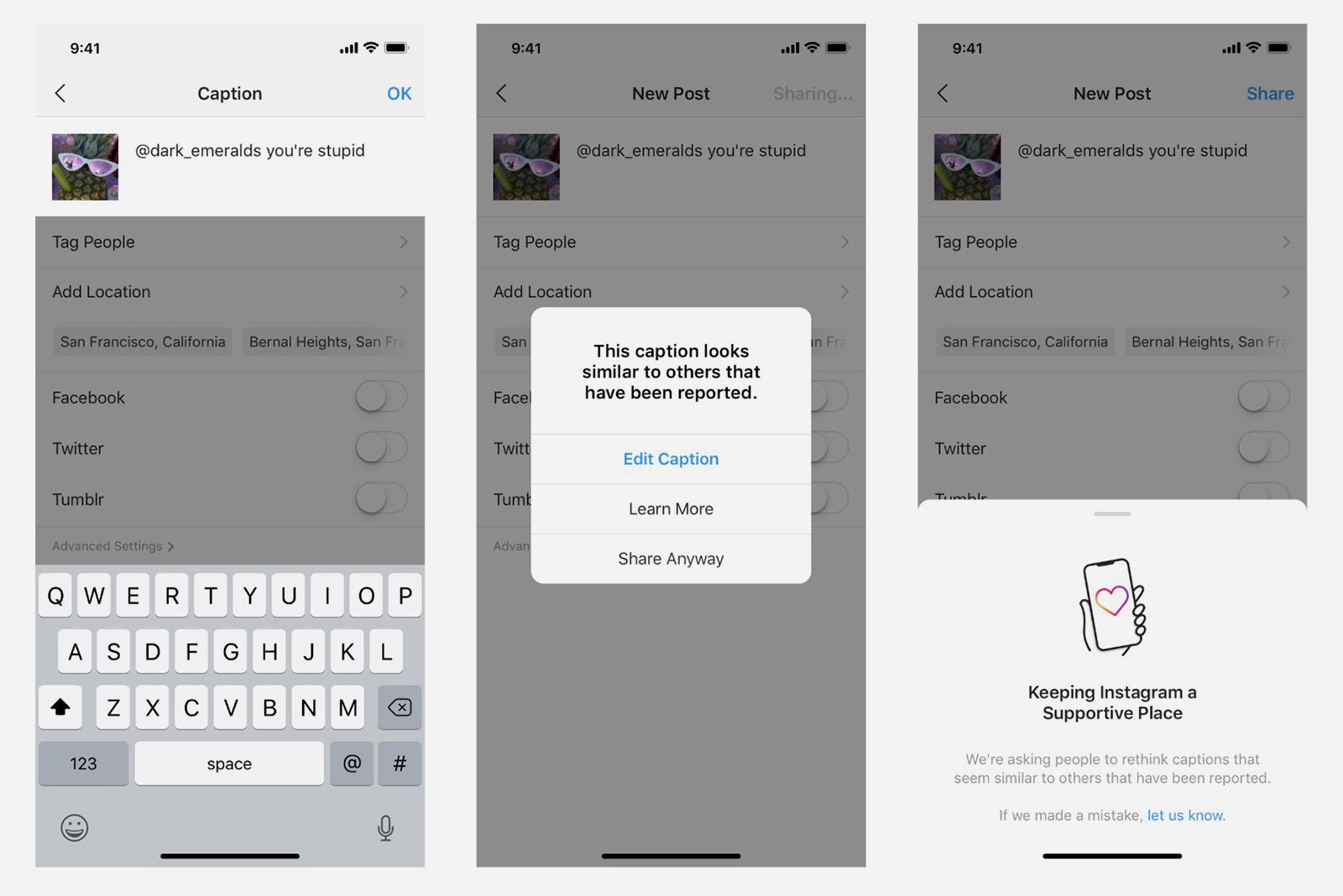

When you write a caption for an Instagram post, here's what will happen:

- Instagram's AI will determine whether the caption is potentially offensive.

- You may receive a prompt informing you that your caption is similar to those reported for bullying.

- You will have the opportunity to edit the caption before it’s posted.

- Or you can share it anyway.

When will this be available?

Instagram said this caption feature is rolling out in select countries, and that it will begin expanding globally in the coming months.