Google Lens is an AI-powered technology that uses your smartphone camera and deep machine learning to not only detect an object in front of the camera lens, but understand it and offer actions such as scanning, translation, shopping, and more.

Lens was one of Google's biggest announcements in 2017, and a Google Pixel exclusive feature when that phone launched. Since then, Google Lens has come to the majority of Android devices - if you don't have it, then the app is available to download on Google Play.

What is Google Lens?

Google Lens enables you to point your phone at something, such as a specific flower, and then ask Google what it is. You'll not only be told the answer, but you'll get suggestions based on the object, like nearby florists, in the case of a flower.

Google Lens also lets you take a picture of the SSID sticker on the back of a Wi-Fi router, after which your phone will automatically connect to the Wi-Fi network without you needing to do anything else. Yep, no more crawling under the cupboard in order to read out the password whilst typing it into your phone. Now, with Google Lens, you can literally point and shoot.

Google Lens will recognise restaurants, clubs, cafes, and bars, too, presenting you with a pop-up window showing reviews, address details and opening times. It's the ability to recognise everyday objects that's impressive. It will recognise a hand and suggest the thumbs up emoji, which is a bit of fun, but point it at a drink, and it will try and figure out what it is.

We tested this functionality with a glass of white wine. It didn't suggest white wine to us, but it did suggest a whole range of other alcoholic drinks, letting you then tap through to see what they are, how to make them, and so on. That shows that, while Lens is fast and clever, it's not always accurate.

We've also tested it with many garden plants and found it a really useful way of finding out what you have growing.

What can Google Lens do?

Aside from the scenarios described above, Google Lens offers the following features:

- QR code reading: Lens can read QR codes and give you corresponding links.

- Translate: You can point your phone at text and, with Google Translate plugged in, see it translated live in front of your very eyes. This can also work offline.

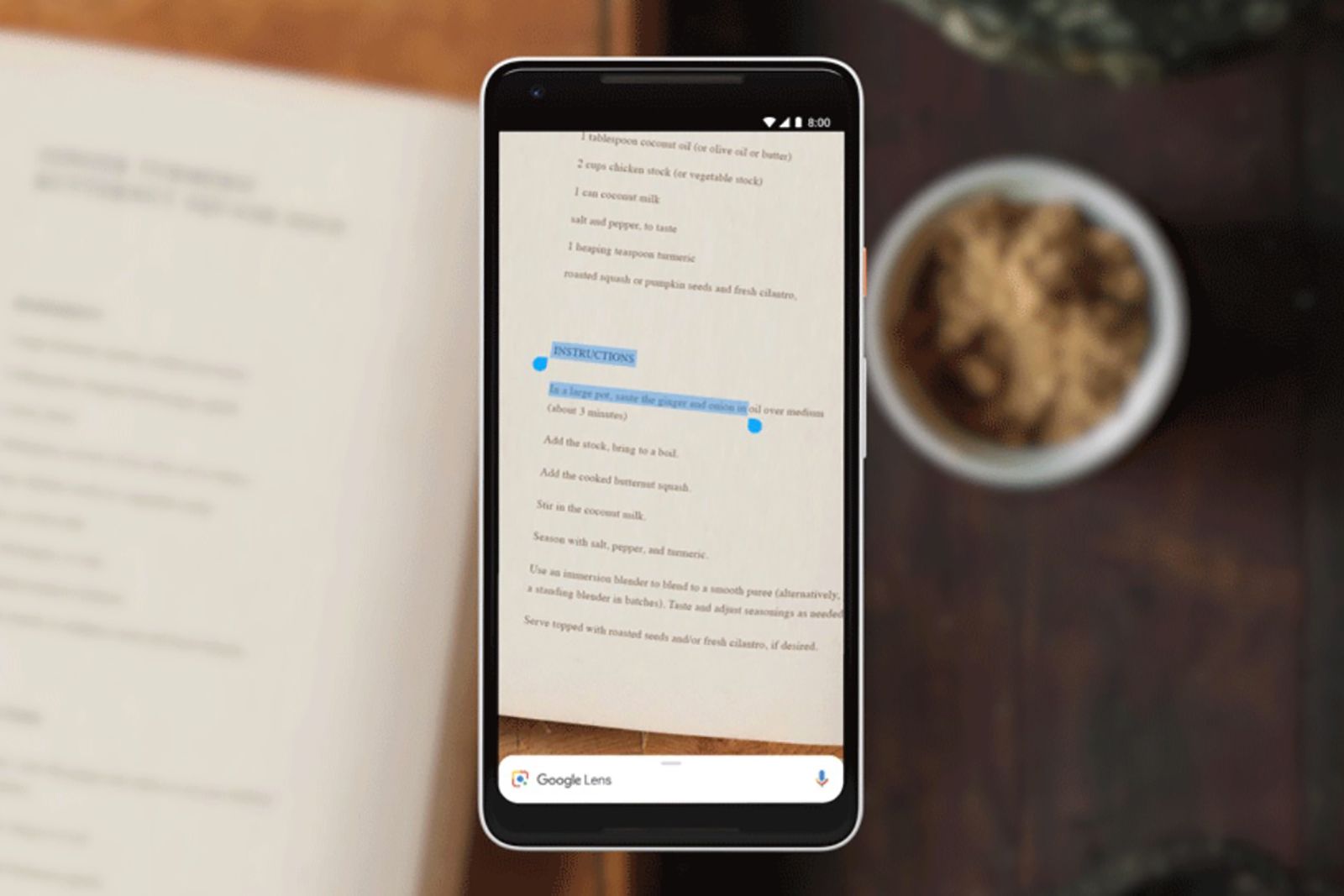

- Smart Text Selection: You can point your phone's camera at text, then highlight that text within Google Lens, and copy it to use on your phone. So, for instance, imagine pointing your phone at a Wi-Fi password and being able to copy/paste it into a Wi-Fi login screen.

- Smart Text search: When you highlight text in Google Lens, you can also search that text with Google. This is handy if you need to look up a definition of a word, for instance.

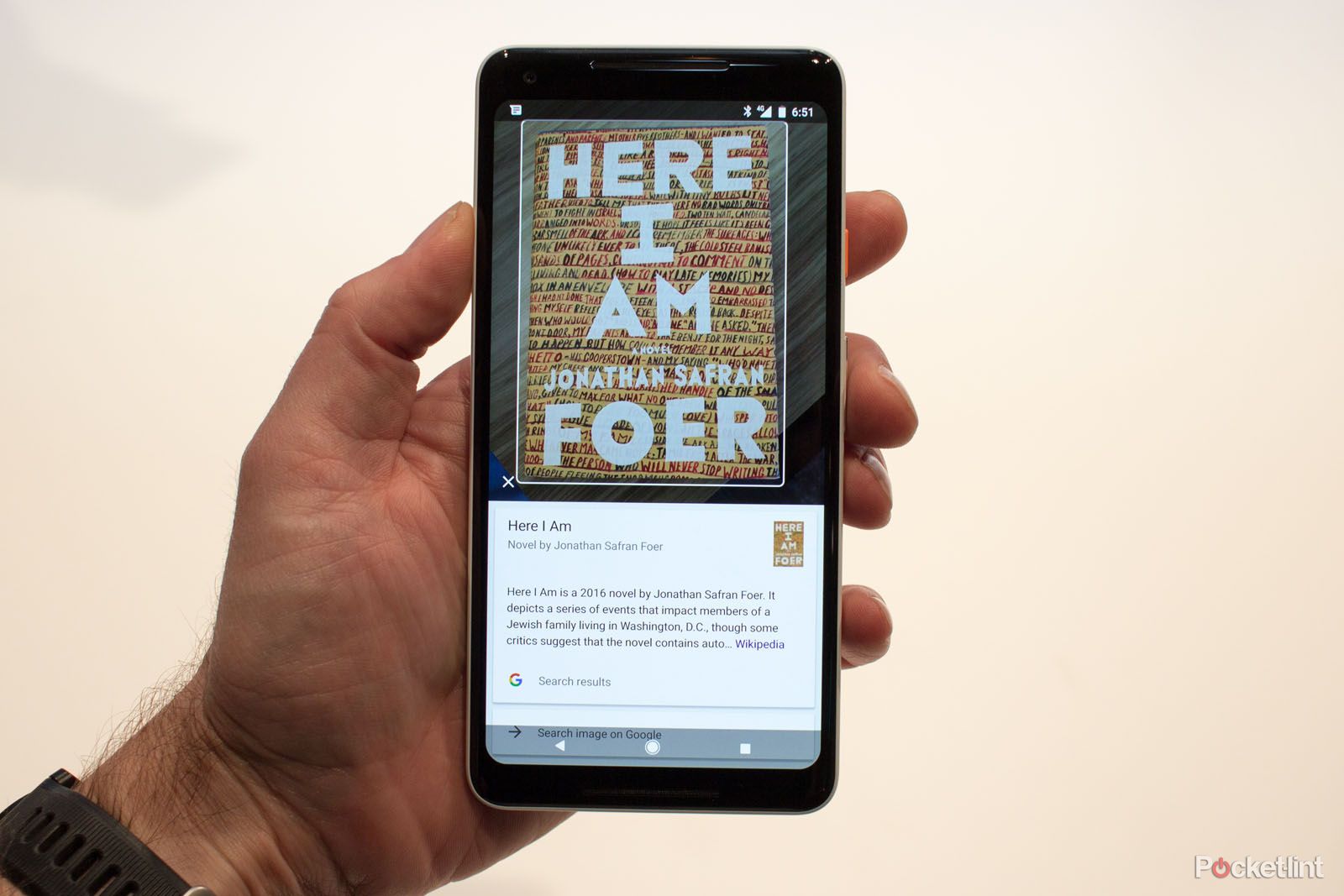

- Shopping: If you see a dress you like while shopping, Google Lens can identify that piece and similar articles of clothing. This works for just about any item you can think of, giving you access to shopping options or reviews.

- Google homework questions: That's right, you can just scan the question and see what Google comes up with.

- Search around you: If you point your camera around you, Google Lens will detect and identify your surroundings. That might be details on a landmark or details about types of food - including recipes.

How does Google Lens work?

Google Lens app

Google has a standalone app on Android for Google Lens if you want to get straight into the features. You can access Google Lens through a whole range of other methods, as detailed below.

The experience is similar whichever approach you take; tapping the Lens icon in the Google search bar takes you through to the same view you get directly in the Lens app.

Google Photos

Within Google Photos, Google Lens can identify buildings or landmarks, for instance, presenting users with directions and opening hours for them. It will also be able to present information on a famous work of art. Maybe it will solve the debate of whether the Mona Lisa is smiling or not.

When browsing your pictures in Google Photos, you'll see the Google Lens icon at the bottom of the window. Tap the icon and you'll see the scanning dots appear on your picture, then Google will serve up suggestions.

Camera app

On some Android phones, Google Lens has been added directly to the device's own camera app. It might be in the 'More' section, but its location will differ depending on manufacturer and user interface. Some apps will use Google Lens to scan things like QR codes directly through the camera.

On the iPhone

If you want to access Google Lens on the iPhone you can get it via the Google app. This app covers a range of Google services which are native on Android devices. Once you've installed the app, you can head to the Google Lens section, grant permission for it to access your iPhone camera and off you go - you'll get all the features above.

Which devices offer Google Lens?

If you're an Android device user, you can access the app. However, there are some exceptions, such as phones banned from Google Services, like those from Huawei - so it's worth checking on Google Play to see if you can get it. It's also available on iPhone or iPad as detailed above.

Google Lens is also baked into other Google apps, like Chrome. Although it might not be immediately obvious, if you're using Chrome and you right-click on an image to find out where it came from, the sidebar that opens is also Google Lens-branded - so there are ways to use it outside mobile devices too.