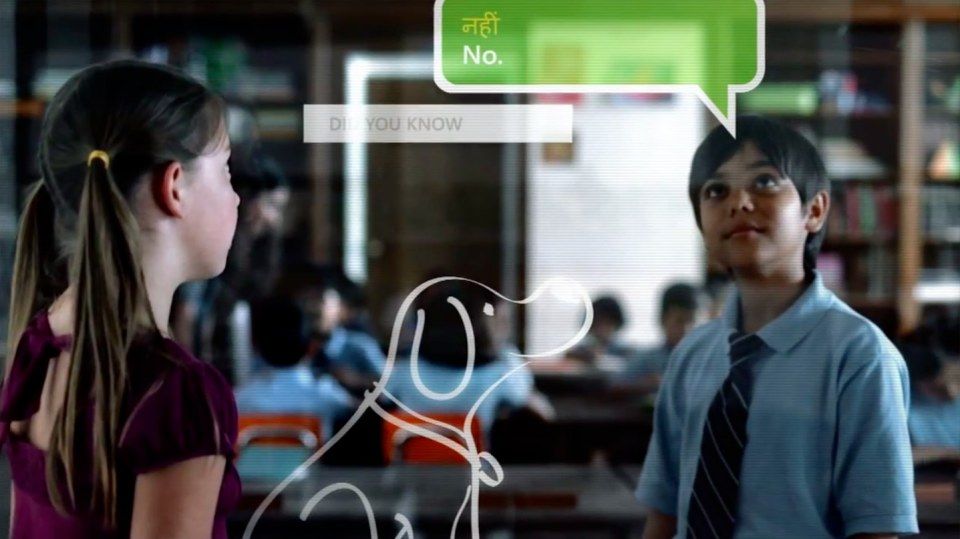

In 2009, Microsoft showed off a video in which it tried to predict the kinds of technology we would be using in the next five to ten years.

One of the ideas that starts its Productivity Future Vision 2009 video is the Magic Window: a pane of glass in your office or classroom that would let you talk to people on the other side of the window as if they were in the next room, even though in reality they could be on the other side of the world.

The 2009 video where the idea started

Video fantasies are one thing, but Microsoft is actually building it, and Pocket-lint visited the Edison Lab, within the Studio B building at the company's headquarters in Redmond, near Seattle, to find out more.

A see-through computer

If you think for a moment about the level of complexity that is needed to create a see-through computer, you start to realise the task ahead for Stevie Bathiche, director of research at Microsoft's applied sciences lab.

A piece of glass doesn't allow for wires or cameras, nor does it allow for sensors or projections. The see-through computer is the "North Star" of what he is working on, as he explains it to Pocket-lint.

The wedge lens

So how do you go about creating a see-through computer? You start, Bathiche explains, with a very flat wedge-like lens that allows you to shine images upwards to be projected outwards.

Light shines through the Wedge lens

This lens sits behind a display and captures information, relaying it to a sensor in the base of the display. Because of the angle of the lens the computer can effectively see and record what is happening in front of the screen.

It's the same approach that a submarine periscope uses, but enhanced to fit in a flat lens rather than something a lot more boxy.

Once you've got the lens you can then start using it to great effect, but that's only one part of the story.

Displaying information on a screen

According to Bathiche, this bit isn't that hard, although it is tricky. Simply putting a camera behind a transparent display and recording the person in front of the screen isn't as easy as it sounds, because you have to remove the echo of the screen you are filming through.

If you don't, the camera simply captures what the user is looking at, in reverse, and that dominates the image. Through a series of other techniques, Microsoft has worked out how to trick the camera into recording not the display, but only what is on the other side of it (you).

Bathiche looks straight into the screen and camera, while the screen to the right shows it working

Trying hard to understand what is, at times, mind-boggling technology, we believe it works in the same way active 3D glasses do: by quickly showing you a left image and a right image and letting your brain be convinced that they are a single picture. It is the same here, but it is the computer that is being fooled, not you, so you don't need to wear glasses.
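To make that guess concrete, here is a minimal Python sketch of time-multiplexing, where the display and the camera take turns so the camera never exposes while the screen is lit. It illustrates our reading of the idea only, not Microsoft's actual method; the function names `schedule` and `captured_echo` and the simple one-to-one slot pattern are assumptions made for the example.

```python
def schedule(num_slots):
    """Build a hypothetical timeline in which display and camera alternate.

    Even slots: the display shows a frame and the camera is idle.
    Odd slots: the display is blanked and the camera exposes.
    """
    slots = []
    for i in range(num_slots):
        display_on = (i % 2 == 0)
        slots.append({
            "slot": i,
            "display_on": display_on,
            "camera_exposing": not display_on,
        })
    return slots


def captured_echo(slots):
    """Count slots in which the camera would record the display's own image."""
    return sum(1 for s in slots if s["display_on"] and s["camera_exposing"])


timeline = schedule(8)
print(captured_echo(timeline))
```

If the switching is fast enough, the viewer sees a steady picture while the camera only ever sees through a dark panel, which is the same trick active-shutter glasses rely on.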

By removing the video echo, Microsoft has been able to create a display that is flat and see-through, but inexpensive, and which works regardless of size - a current drawback for transparent OLED technologies being touted by Samsung.

A Kinect sensor built in the screen tracks eye movement and shows each player different video on the same screen

In practical use, this part of the technology has the clearest use case, especially considering Microsoft owns video chat service Skype.

Imagine talking to others you are chatting with on your television, tablet or laptop, simply by looking into the screen rather than having the awkward viewing angle of looking into a webcam positioned to the top or to the side. It makes a lot of sense.

Understanding gestures

Microsoft believes in a gesture-driven world for its computer interfaces and those gestures vary depending on the context. You only have to look at Windows 8 and the Kinect sensor for the Xbox 360 to see that gesture, whether it is touch or movement, is a key to how Microsoft believes we will interact with computers in the future.

To do that you need a Kinect sensor (or similar) in the Magic Window concept too. Having solved the display issues and the video echo recording issues, Stevie Bathiche and his team have solved the problem of monitoring your gestures too.

Remember, for the Magic Window concept to work you can't have a mounted Kinect monitoring everything from behind or in front of the user, as you would with a standard Kinect setup. The sensor needs to be in the glass itself.

With a camera and Kinect sensor built in to the display it can track hand movement in front of the display

In comes the wedge design again. Tracking movement over the screen, be it your eyes, your face or your hands, is going to be important for a number of aspects of the project to work.

Where the technology starts to get really clever is that it can determine where you are in relation to the glass and relay information accordingly. That means it can track your head to show you a specific image that is different from the image being shown to someone else in the room, or know that you are waving your hands in front of the screen to gesture for a new page or to control something specific. In our demo, that meant stopping a waterfall from hitting a leaf with one hand while using the other to brush the leaf along, even though we never touched the screen.

The screen to the right shows that the screen can see the user's hand

Gesture technology is already starting to appear in consumer products. Leap Motion, Samsung, Toshiba and others are on the cusp of letting us control our phones and our computers by looking or moving. Microsoft's very own Kinect sensor lets you do similar things but, as Bathiche explains to us, not at the same level of detail.

The Magic Window

The final challenge is to allow the computer to track what you see as you move your head, as if you were looking through a glass window frame.

Again it's not as easy as it sounds, because the angle of what you see changes as you move your head - try it now out of a nearby window and you'll instantly see the problems you need to solve. Moving to the right in real life allows you to get a viewpoint further to the left, while looking up lets you see more of the ceiling, for example.

Here the team has used a Kinect sensor to track your eye movement and then relay an image of what you are seeing in the other room on to the screen. That instantly gives you the impression of peering into another room and is held back only by the quality of the video you are seeing - 4K will solve this, however.
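The geometry behind that window effect can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the lab's implementation: the window is a flat rectangle centred at the origin, the remote room is treated as a flat plane a fixed distance behind it, and the function name `visible_region` and its parameters are made up for illustration.

```python
def visible_region(eye, window_w, window_h, scene_depth):
    """Return (left, right, bottom, top) of the scene plane seen through the window.

    eye: (x, y, z) position of the viewer's eye, with z > 0 being the
         distance in front of the window plane (all units in metres).
    scene_depth: assumed distance of the remote scene plane behind the window.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("the eye must be in front of the window (z > 0)")

    # A ray from the eye through a window edge reaches the scene plane
    # after travelling this multiple of the eye-to-window distance.
    t = (ez + scene_depth) / ez

    def project(edge, eye_coord):
        return eye_coord + t * (edge - eye_coord)

    left = project(-window_w / 2, ex)
    right = project(window_w / 2, ex)
    bottom = project(-window_h / 2, ey)
    top = project(window_h / 2, ey)
    return left, right, bottom, top


centred = visible_region((0.0, 0.0, 1.0), 1.0, 1.0, 1.0)
moved_right = visible_region((0.5, 0.0, 1.0), 1.0, 1.0, 1.0)
print(centred, moved_right)
```

Moving the eye to the right shifts the whole visible region to the left, which is the behaviour a tracked display has to reproduce as the head position changes.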

In practical terms the technology could also be used to display 3D images without the need for glasses, something Microsoft calls a “Steerable AutoStereo 3-D Display”. The team uses the Wedge technology behind an LCD monitor to direct a narrow beam of light into each of a viewer’s eyes.

By using a Kinect head tracker, the user’s relation to the display is tracked, and thereby, the prototype is able to “steer” that narrow beam to the user.

The combination creates a 3-D image that is steered to the viewer without the need for glasses or holding your head in place.
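As a rough illustration of the steering step, the Python sketch below turns a tracked head position into a horizontal angle for each eye. It is an assumption-laden example rather than the prototype's code: the function name `eye_steering_angles`, the coordinate convention (x to the viewer's right, z straight out from the display centre) and the 0.063 m default eye separation are all ours.

```python
import math


def eye_steering_angles(head_x, head_z, eye_separation=0.063):
    """Return (left_eye_deg, right_eye_deg) horizontal angles from the display centre.

    head_x: sideways offset of the head from the display centre, in metres.
    head_z: distance of the head from the display, in metres (must be > 0).
    eye_separation: assumed distance between the viewer's eyes, in metres.
    """
    if head_z <= 0:
        raise ValueError("the head must be in front of the display")

    half = eye_separation / 2
    left_eye = math.degrees(math.atan2(head_x - half, head_z))
    right_eye = math.degrees(math.atan2(head_x + half, head_z))
    return left_eye, right_eye


print(eye_steering_angles(0.0, 0.6))
print(eye_steering_angles(0.2, 0.6))
```

Each time the head tracker reports a new position, the two angles are recomputed, so the left-eye and right-eye images can follow the viewer rather than forcing the viewer to sit still.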

It's the final piece of the puzzle, says Bathiche, and the one that is needed the most to give you the idea that you are just on the other side of a piece of glass rather than looking at another monitor.

Tomorrow, today

Creating what is in the video is Bathiche's holy grail, and one that he seems to have achieved in several rudimentary prototypes in the Edison Lab. At the moment the tech is still in the lab. It is still bundles of wires, raw systems, and individual parts that are nowhere near ready for consumers like you and us.

But remember, this concept, part of a Microsoft Envisioning video, is branded as technology that is five to ten years out. Given what we have seen, that goal appears to be very achievable, and we suspect parts of the technology will come even sooner.

While Magic Windows are unlikely to be common in the workplace just yet, expect your young kids to be using them to do business in the future. Window cleaners, your life is about to get a whole lot busier.